StereoFG: Generating Stereo Frames from Centered Feature Stream

Abstract

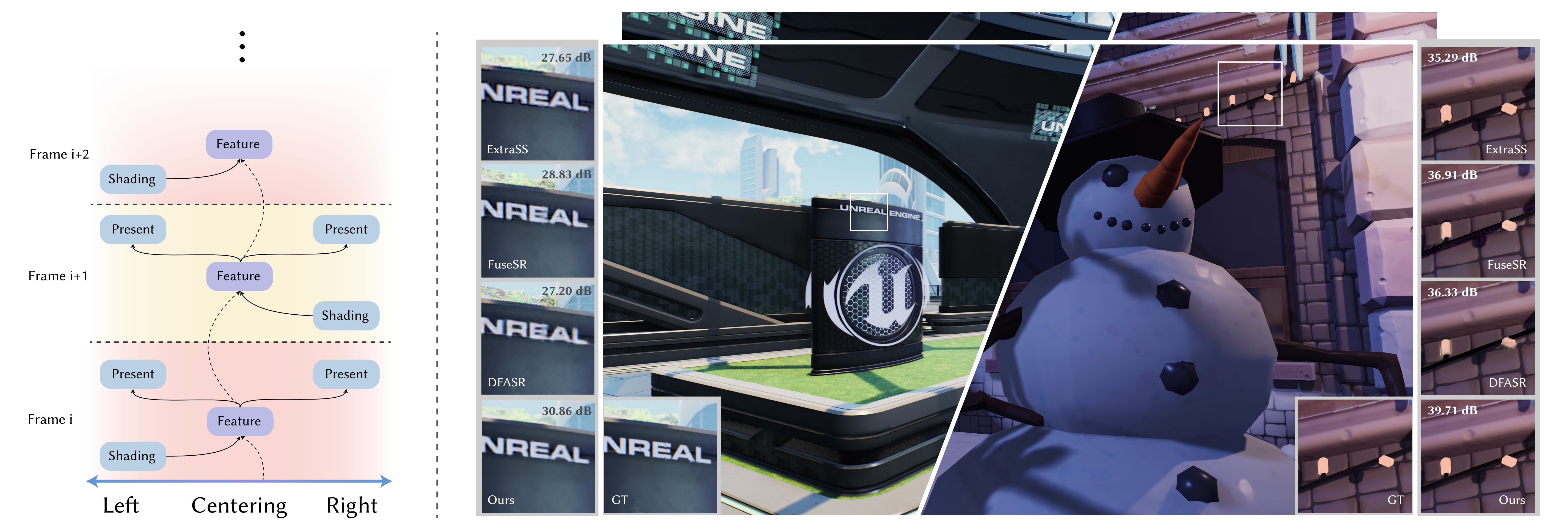

In recent years, the community has seen the emergence of neural super-resolution and frame generation techniques. These methods speed up high-resolution rendering by exploiting the spatial and temporal coherence between sequential frames, but none is designed specifically to improve rendering performance in VR applications, where stereo rendering doubles the rendering cost. To explicitly exploit the binocular coherence between the left and right views in VR, we design a centering scheme that aligns the features from both eyes, so the network can handle binocular information efficiently. With this centered feature as the core, we use a novel cyclic network to propagate the centered feature to the next frame, which improves temporal stability. Finally, we propose a new multi-frequency composition scheme that robustly blends pixels of various frequencies to generate high-quality images. The resulting network architecture exploits both the temporal and the cross-view coherence of the stereo rendered results. Building on it, we propose a novel neural frame generation pipeline in which only one view is shaded at low resolution per frame, alternating between the left and right eyes; the network then generates high-quality, quadruple-scale renderings of both views with superior temporal stability. Experiments demonstrate the effectiveness of our method across a variety of scenarios, including complex lighting variations, intricate aggregate geometries, and multi-object motion.
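The alternating shading schedule described above can be illustrated with a small sketch. It is not the authors' implementation: the function names, the choice of the left eye for even frames, and the reading of the scale factor as a per-axis multiplier are all assumptions made for illustration. The sketch only counts shaded pixels and ignores the cost of network inference.

```python
from fractions import Fraction


def shaded_eye(frame_index: int) -> str:
    """Return which eye is shaded at low resolution on this frame.

    Assumption: even frames shade the left eye, odd frames the right.
    The abstract only states that the two eyes alternate.
    """
    return "left" if frame_index % 2 == 0 else "right"


def shaded_pixel_fraction(axis_scale: int) -> Fraction:
    """Shaded pixels per frame relative to shading both views at full resolution.

    Assumption: `axis_scale` multiplies width and height alike, so one
    low-resolution view has 1 / axis_scale**2 of the pixels of one output view.
    Two output views are generated, hence the extra factor of 2.
    """
    return Fraction(1, 2 * axis_scale * axis_scale)


# Which eye is shaded over the first four frames.
schedule = [shaded_eye(i) for i in range(4)]
print(schedule)

# Fraction of pixels shaded under two possible readings of "quadruple scale".
for scale in (2, 4):
    print(scale, shaded_pixel_fraction(scale))
```

Under these assumptions, a per-axis scale of 2 (four times the pixel count) means one eighth of the output pixels are shaded per frame, and a per-axis scale of 4 means one thirty-second.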

Results

BibTeX

@inproceedings{zuo2025stereofg,
  title={StereoFG: Generating Stereo Frames from Centered Feature Stream},
  author={Zuo, Chenyu and Yuan, Yazhen and Wu, Zhizhen and Liu, Zhijian and Lan, Jingzhen and Fu, Ming and Huo, Yuchi and Wang, Rui},
  booktitle={Proceedings of the SIGGRAPH Asia 2025 Conference Papers},
  pages={1--11},
  year={2025}
}